In roughly a decade, financial systems have been subject to two extreme shocks of a very different nature: the Global Financial Crisis (GFC) and the Covid shock. Risk management models failed in both cases: in the face of an endogenous shock (the GFC) and of an exogenous shock (the pandemic, which came from outside the perimeter of the economy and the financial system). Whilst the first failure was widely recognised and led to sweeping regulatory reviews, the second has gone unnoticed, because financial operators fared much better than the models foresaw (the models overestimated risks). Modern risk management tools are essential for effective risk control in financial firms, but they are not a silver bullet. We need to recognise this and embrace a holistic approach combining expert judgement, a faster review of assessments and policy responses (as we learn by experience) and, why not, a greater use of intuition.

When I was a young economist in the Research Department of the Bank of Spain, working mostly as an applied macroeconomist, a research paper by Larry Summers made an impression on me. It was called “The Scientific Illusion in Empirical Macroeconomics”.1 I am no longer sure where this article sits in the literature (whether it has lost relevance) or whether its author still stands by it. But its conclusions were very clear: the abstract states that “It is argued that formal econometric work, where elaborate technique is used to apply theory to data or isolate the direction of causal relationships when they are not obvious a priori, virtually always fails. The only empirical research that has contributed to thinking about substantive issues and the development of economics is pragmatic empirical work, based on methodological principles directly opposed to those that have become fashionable in recent years”. I have tried ever since to be more modest in my research approach, and most probably failed!

But the right tone in applied economic research remains elusive. Technological progress, the widespread use of computers and the growing capacity to analyse more data are not helping in the search for a sensible, pragmatic strategy. And despite the frequent shocks that have exposed the limits of modelling, the over-engineering trend continues. To be sure, modelling, the use of more data and hardware capable of handling sheer volumes of information are all net positives. But, yet again, the key issue is the risk of overreliance.

The field of finance seemed immune to the scepticism that was widespread in the macro field. The use of daily, even hourly or real-time, statistical data seemed to guarantee that the analysis was sounder than in macro. But then came the Global Financial Crisis, breaking historical correlations within hours. And, of course, models didn't survive the shock untarnished.

The GFC shock, of an endogenous nature, was followed in less than a decade by the Covid shock, an exogenous shock orthogonal to the functioning of the economy and the financial sector. In what follows we reflect on what the Covid shock has done to risk management models in finance, and on whether we are ignoring the challenges posed by the emergence of orthogonal exogenous shocks (such as Covid-19, but also the Russian invasion of Ukraine).

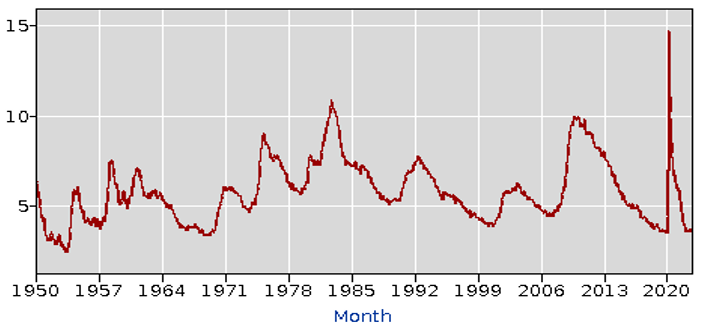

Now that the pandemic is petering out with an O(micron) bang, it is time to look back, but also to look forward. Looking back, we are amazed at how we managed to survive not just the pandemic and the lockdowns, but also their enormous impact on variables like employment and GDP growth. In fact, I vividly recall the panic I felt when the first US unemployment rate figures of the pandemic came out: that vertical line was something we had never witnessed. Literally.

Figure 1: US Unemployment Rate (Jan 1950-Nov 2022)

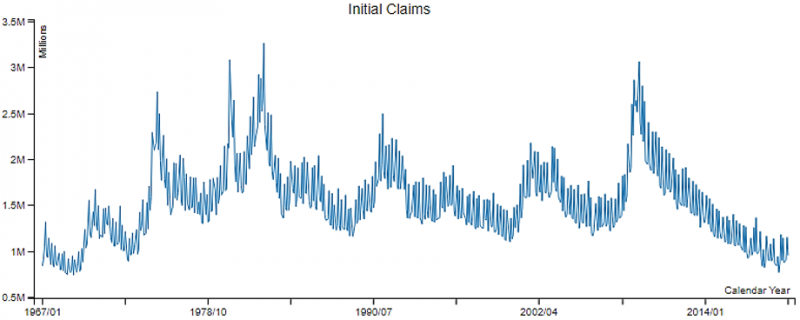

And the weekly series of initial unemployment benefit claims in the US is even more striking. The pre-Covid time series looked like this:

Figure 2: Regular State UI Program Initial Claims (monthly data from 1967-2019)

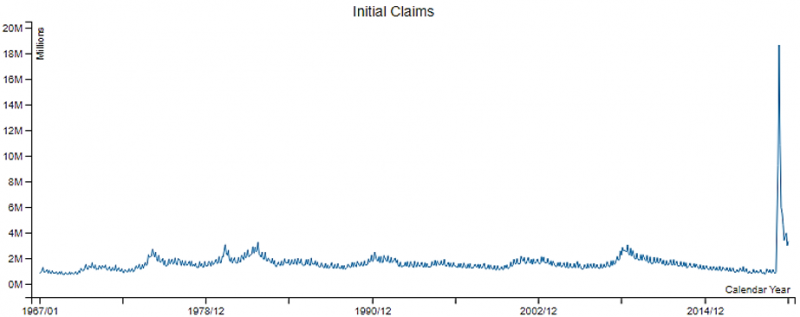

And then came Covid, and the series metamorphosed into this:

Figure 3: Regular State UI Program Initial Claims (monthly data from 1967-2020)

What were my thoughts? Basically, “this is how it ends”. Judging by its impact, the pandemic looked like a non-survivable event for the financial system, and for banks in particular. After all, the initial impact was several times bigger than that of the infamous Great Recession of 2007-2012. Any calculation of how GDP figures would feed through into PDs and LGDs made it impossible to remain, not just optimistic or positive, but even composed.

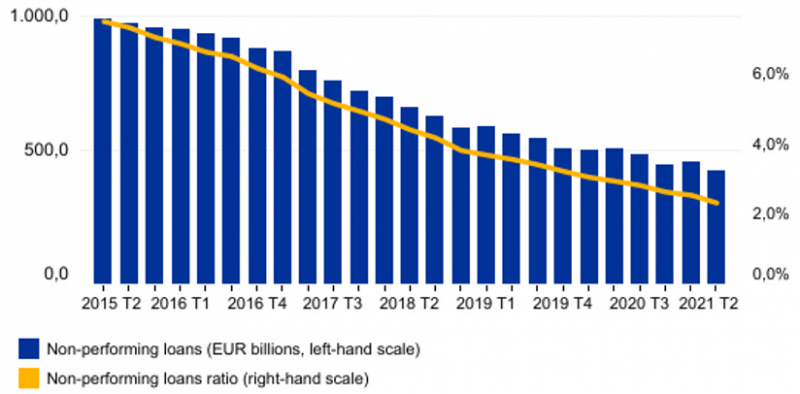

And yet, a few years later, we are still suffering the lingering health effects of the pandemic, but with no impact whatsoever on banks. Not only do we have no financial stability concerns; not even the weakest banks ran into trouble. Of course, we should congratulate ourselves that banks are in much better shape than in 2007, in terms of capital and liquidity, but also on risk management grounds. And special credit is due to the combined effort of governments, central banks and banks to avert a credit squeeze that would have had dire consequences for the economy. And yet, while recognising the importance and adequacy of the measures taken, there is still something puzzling about these positive developments.

Figure 4: Asset Quality—evolution of non-performing loans (2015 Q2 – 2021 Q2)

Source: ECB.

Figure 5: Return on Equity (%)

Source: ECB.

In the coming pages we will try to clear this Covid fog and identify the main challenges that this structural change represents for banks' risk managers and supervisors. Put simply, the reliability of models has been undermined in the wake of Covid, making it more difficult to understand the risks embedded in banks' balance sheets. We are not referring just to the complacency about risks that comes from having survived an unprecedented crisis without scars. Far more relevant is the structural break that the crisis introduces into the operation of models: the correlation between GDP growth and NPLs has broken down.

Does the past tell us something about the future? Yes, of course. Indeed, although individual outcomes need not repeat themselves over time, in economics and finance the past is consistently used to forecast the future. Or, more precisely, data is used to extrapolate trends, be it time series data or a mix of time series and cross-section data (cross-section data being multiple data points referring to a single point in time).

We all learned the basics in our graduate and postgraduate studies. But we usually overlook the conditions attached to the Data Generating Processes (DGPs), the unobservable stochastic processes that produce the data we observe. To extract information from the data we do observe, a few conditions must hold, ergodicity and normality being the most widely required.

Models are extremely useful for correcting many of the subjective biases we observe in everyday life (in particular, the tendency of everyone from investment bankers to politicians to be too optimistic about future outcomes). But they have limits rooted in the conditions under which they are supposed to work.2

In the field of finance, models are used on the asset side for pricing purposes (to compute risk-adjusted returns), for risk management purposes (to estimate potential losses), for supervision, in particular for conducting stress tests, and, since Basel II, to compute minimum capital requirements. For instance, for the banking book the well-known formula defines the main components of credit risk:3

EL=PD*LGD*EAD

where EL is the expected loss, PD the probability of default, LGD the loss given default and EAD the exposure at (the moment of) default. This formula is the basic tool for managing risk in the banking book.
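As a minimal illustration of the formula, the Python snippet that follows computes EL exposure by exposure and sums it over a small loan book. The portfolio and its PD, LGD and EAD values are hypothetical, chosen only to keep the arithmetic easy to follow.

```python
# Hypothetical loan book: (name, PD, LGD, EAD in EUR)
portfolio = [
    ("mortgage", 0.005, 0.15, 200_000),
    ("sme_loan", 0.02, 0.45, 500_000),
    ("consumer", 0.04, 0.75, 20_000),
]

def expected_loss(pd, lgd, ead):
    """EL = PD * LGD * EAD for a single exposure."""
    return pd * lgd * ead

# Expected losses are additive across exposures
total_el = sum(expected_loss(pd, lgd, ead) for _, pd, lgd, ead in portfolio)
print(f"Total expected loss: EUR {total_el:,.0f}")
```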

From this formula you can derive a number of sound management principles. Your pricing needs to cover the expected loss and a reasonable profit margin. You should cover your expected losses with provisions. You have to estimate the recovery value of your loans and advances in case things go sour. And you need capital to protect against worst-case scenarios, that is, against unexpected losses (the worst-case financial loss and/or impact that a business could incur due to a particular loss event or risk realisation that is not covered by margins or provisions).
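The split between expected and unexpected losses can be sketched numerically. The Python example that follows is simplified and hypothetical: the unexpected-loss part follows the one-factor (Vasicek) structure that underlies the Basel II IRB risk-weight formula, but it omits the maturity adjustment and fixes the asset correlation at 0.12 (the lower end of the Basel II corporate range) instead of deriving it from the PD. The pricing rule in `minimum_spread`, the 10% cost of capital and the 0.2% margin are illustrative assumptions, not a regulatory or industry standard.

```python
from statistics import NormalDist

N = NormalDist()  # standard normal: N.cdf and N.inv_cdf

def expected_loss(pd, lgd, ead):
    """Expected loss: to be covered by pricing and provisions."""
    return pd * lgd * ead

def unexpected_loss(pd, lgd, ead, rho=0.12, confidence=0.999):
    """Loss above EL at the given confidence level in a one-factor (Vasicek) model.

    Simplified sketch: no maturity adjustment, fixed asset correlation rho (assumed).
    """
    # Default rate when the systematic (economy-wide) factor sits at its adverse quantile
    stressed_pd = N.cdf((N.inv_cdf(pd) + rho ** 0.5 * N.inv_cdf(confidence)) / (1 - rho) ** 0.5)
    return lgd * (stressed_pd - pd) * ead

def minimum_spread(pd, lgd, ead, cost_of_capital=0.10, margin=0.002):
    """Hypothetical pricing rule: EL rate + cost of holding UL capital + profit margin."""
    el_rate = expected_loss(pd, lgd, ead) / ead
    capital_charge = cost_of_capital * unexpected_loss(pd, lgd, ead) / ead
    return el_rate + capital_charge + margin

# Hypothetical corporate loan: PD 1%, LGD 45%, EAD EUR 1m
el = expected_loss(0.01, 0.45, 1_000_000)       # provisions should cover this
ul = unexpected_loss(0.01, 0.45, 1_000_000)     # capital should cover this
spread = minimum_spread(0.01, 0.45, 1_000_000)  # pricing should at least cover this
print(f"EL: {el:,.0f}  UL capital: {ul:,.0f}  minimum spread: {spread:.2%}")
```

The design choice is to keep the three uses of the formula visible side by side: provisions for EL, capital for UL, and a price that covers both plus a margin.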

The Global Financial Crisis was nevertheless a stark reminder of the limits of models. After prolonged periods of moderation, models will underestimate losses, quite severely for some banks and portfolios. Models use past observations, but they also weight recent years much more heavily than remote ones. As a result, biases may appear.
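To see how recency weighting can produce this bias, the Python sketch that follows compares a plain long-run average of default rates (in the spirit of a through-the-cycle estimate) with an exponentially weighted average that favours recent years. The default-rate history and the decay factor of 0.7 are invented for illustration; real PD models are far richer than a single weighted average.

```python
# Illustrative annual default rates, oldest first: a crisis early on, then a long benign spell
default_rates = [0.010, 0.012, 0.030, 0.045, 0.025, 0.012,
                 0.008, 0.006, 0.005, 0.005, 0.004, 0.004]

def ewma(series, decay):
    """Exponentially weighted average: weight decay**age, where the latest observation has age 0."""
    weights = [decay ** age for age in range(len(series))]
    recent_first = list(reversed(series))
    return sum(w * x for w, x in zip(weights, recent_first)) / sum(weights)

long_run_pd = sum(default_rates) / len(default_rates)  # equal weight on every year
recent_weighted_pd = ewma(default_rates, decay=0.7)     # decay factor is an assumption
print(f"long-run average PD: {long_run_pd:.2%}")
print(f"recency-weighted PD: {recent_weighted_pd:.2%}")
```

With a crisis early in the sample and a long benign spell afterwards, the recency-weighted estimate comes out at less than half the long-run average: the kind of complacency a prolonged period of moderation can build into models.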

That is what happened in the GFC: no one was predicting the kind of losses that materialised. The causes were obvious only with hindsight. For instance:

The response to the crisis, the regulatory reform, strengthened the financial system in the weak spots exposed by the GFC. First and foremost, banks were required to hold far more capital, of better quality (CET1 capital), and far greater liquidity buffers. The reform also aimed to shore up other weak spots, in particular in the shadow banking sphere (reducing arbitrage incentives by extending bank regulation to bank-like financial institutions managing credit risk) and in financial market infrastructures (for instance, by forcing more derivatives to be cleared through Central Counterparty Clearing Houses). The reforms will no doubt reduce the chances of another meltdown like the one seen during the GFC. But, at the same time, the increase in capital and liquidity buffers, and the safety and soundness they bring, may create a dangerous false sense of security.

Models failed during the GFC, and the reasons why they did cannot be corrected through regulation. Some measures, like a greater use of through-the-cycle (TTC) PDs, may help, but they are not a panacea. The financial system is cyclical, booms follow busts, and models based on market inputs will be affected by this cyclical nature. Another problem that surfaced during the GFC is that of interdependencies. Busts give rise to unexpected contagion channels, to interdependencies hidden in the ever-increasing complexity of the extended financial sector, which includes, in particular, the shadow banking sector. These interdependencies are not constant over time, meaning that diversification strategies that work in good times may turn sour in bad times and multiply, rather than reduce, losses.
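The point about diversification can be made with the textbook two-asset volatility formula. In this Python sketch the weights, the 20% volatilities and the two correlation values (0.2 in calm times, 0.9 under stress) are hypothetical; the only point is that the diversification benefit shrinks as correlations rise.

```python
from math import sqrt

def portfolio_vol(w1, vol1, w2, vol2, corr):
    """Volatility of a two-asset portfolio for a given correlation between the assets."""
    variance = (w1 * vol1) ** 2 + (w2 * vol2) ** 2 + 2 * w1 * w2 * vol1 * vol2 * corr
    return sqrt(variance)

# Hypothetical: two equally weighted exposures, each with 20% volatility
calm = portfolio_vol(0.5, 0.20, 0.5, 0.20, corr=0.2)    # assumed good-times correlation
stress = portfolio_vol(0.5, 0.20, 0.5, 0.20, corr=0.9)  # assumed crisis correlation
undiversified = 0.5 * 0.20 + 0.5 * 0.20                 # correlation of 1: no benefit at all

print(f"calm: {calm:.1%}, stress: {stress:.1%}, undiversified: {undiversified:.1%}")
```

Here portfolio volatility rises from about 15.5% to about 19.5%, close to the 20% of a book with no diversification at all, without any change in the positions themselves.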

We have been talking about financial risk modelling as used by banks and regulated by authorities. This may give the false impression that the private sector has got it wrong and that the supervisory authorities, the ones that design the top-down stress tests for the system as a whole, know better. That may well not be the case. The failure of models during the pandemic went hand in hand with the failure of stress tests.

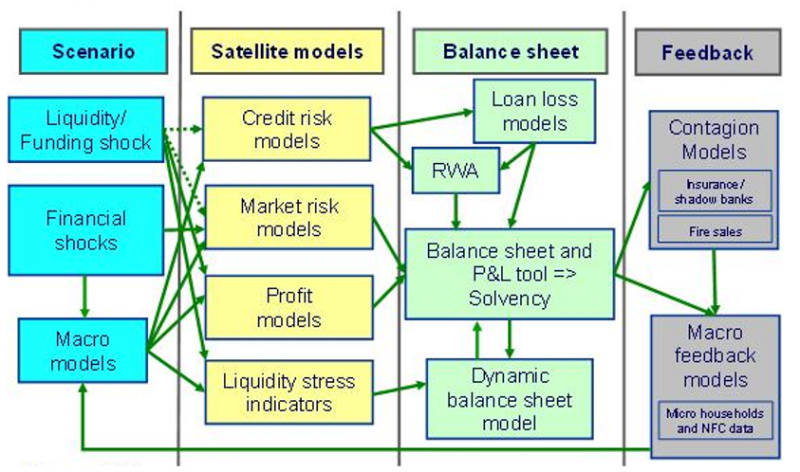

Stress tests start with the definition of harsh, albeit credible, macroeconomic scenarios. The purpose is not to design “end of the world” situations that would wipe out all financial institutions, but simply to tighten the bolts of the system and identify where pressure may crack the structure. Once the scenarios are defined, they are mapped onto financial institutions' balance sheets using basically the same structure used for models: the mapping of macro scenarios to probabilities of default, the measurement of exposures, the losses in case loans go sour, etc. Finally, spillovers and contagion effects are factored in. The following chart4 summarises the stress-test methodology.

Figure 6: The four pillar structure of the ECB solvency analysis framework

Source: ECB. Note: “RWA” refers to risk-weighted assets.
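A stylised version of the first steps of such a framework (scenario, stressed PDs, credit losses, capital impact) might look like the Python sketch that follows. Everything in it is hypothetical: the logit "satellite" model linking GDP to PDs, its coefficient `beta`, the two portfolios and the bank's CET1 and RWA figures. The contagion and feedback step is left out, and RWAs are held constant, for brevity.

```python
from math import exp, log

def pd_under_scenario(baseline_pd, gdp_shock, beta=0.25):
    """Hypothetical logit satellite model.

    gdp_shock is the GDP deviation from baseline in percentage points (negative = recession);
    each -1pp raises the log-odds of default by beta (an assumed coefficient).
    """
    log_odds = log(baseline_pd / (1 - baseline_pd)) - beta * gdp_shock
    return 1 / (1 + exp(-log_odds))

def stress_test(exposures, gdp_shock, cet1, rwa):
    """Scenario -> stressed PDs -> credit losses -> post-stress CET1 ratio (RWA held fixed)."""
    losses = sum(pd_under_scenario(pd, gdp_shock) * lgd * ead for pd, lgd, ead in exposures)
    return (cet1 - losses) / rwa, losses

# Hypothetical bank (EUR bn): (baseline PD, LGD, EAD) per portfolio
exposures = [(0.01, 0.45, 600.0), (0.03, 0.60, 200.0)]
ratio, losses = stress_test(exposures, gdp_shock=-6.0, cet1=50.0, rwa=400.0)
print(f"stress losses: {losses:.1f}bn, post-stress CET1 ratio: {ratio:.1%} (from {50 / 400:.1%})")
```

The whole chain hinges on the GDP-to-PD link; if that link breaks, as it did during Covid, projected losses can be far off even with a well-specified scenario.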

The current era of unprecedented exogenous shocks and black swans also weighs very heavily on the reliability of stress tests. First, the design of scenarios becomes far more complicated: maybe modelling an alien invasion or a zombie apocalypse does not sound unrealistic anymore! On a serious note, when the unexpected becomes the norm, designing credible scenarios becomes very difficult. But design is just the first of the problems. Feeding scenarios into models is not easy when dealing with unknown unknowns. And translating them into the main figures of banks' balance sheets is also a challenge when there is no previous experience to guide the design of a transition matrix.
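For readers less familiar with the term, a transition (migration) matrix gives the probability of a loan moving from one credit state to another over a period. The Python sketch that follows uses three hypothetical states and two invented matrices, a baseline and a stressed one, to show how the choice of stressed probabilities drives the projected default share. Without precedent, those stressed entries have to come largely from judgement.

```python
# Hypothetical one-year migration matrices over three states: performing, watch-list, default.
# Row i gives the probabilities of moving from state i to each state; each row sums to 1.
BASELINE = [
    [0.95, 0.04, 0.01],
    [0.20, 0.70, 0.10],
    [0.00, 0.00, 1.00],  # default is absorbing
]
STRESSED = [
    [0.85, 0.11, 0.04],
    [0.10, 0.65, 0.25],
    [0.00, 0.00, 1.00],
]

def migrate(distribution, matrix):
    """Share of the book in each state after one period (row vector times matrix)."""
    n = len(matrix)
    return [sum(distribution[i] * matrix[i][j] for i in range(n)) for j in range(n)]

book = [0.90, 0.10, 0.0]  # 90% performing, 10% watch-list, nothing in default yet
base_next = migrate(book, BASELINE)
stressed_next = migrate(book, STRESSED)
print(f"default share: baseline {base_next[2]:.1%}, stressed {stressed_next[2]:.1%}")
```

In this toy case the stressed matrix more than triples the share of the book in default after one year (6.1% versus 1.9%), entirely as a result of the assumed entries.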

Should we just give up on measuring risks within the financial sector? No, by no means should we go down this route. On the contrary, we should make the fullest possible use of the risk aggregation and risk identification techniques that the IT revolution is bringing about. A prerequisite for risk management is the timely identification of risks.

But, at the same time, we should not fall into the trap of believing that technology eliminates risk mismanagement as the main source of financial failures, or, on the supervisors' side, that thanks to technology they have complete control of the dynamics between the economy and the financial sector. The scientific illusion of perfect foresight has been torn down by the GFC and the pandemic. And, thank God, in the case of the pandemic the surprise went the right way: the effects of Covid-19 on the financial system have been far more moderate than initially foreseen.

Going forward, we should therefore embrace state-of-the-art risk management techniques, but recognise that we live in a world of black swans, most of them exogenous in nature (a virus, a war, etc.). In this world, a greater use of expert judgement, a faster review of assessments and policy responses (as we learn by experience) and, why not, a greater use of intuition will be needed to ensure we can deal with future challenges.

Finally, we should also try to simplify our methods and make better use of the scarce resources of firms and supervisors. We must recognise there is no silver bullet, no magic method to manage risks in periods of uncertainty. We should therefore try not to measure risks in nanograms in the denominator of the capital adequacy formula (the RWAs) and concentrate instead on having a sound numerator: volumes of capital high enough to navigate these uncharted waters. In the end, the greatest trap we could fall into is complacency: the moderate impact of Covid on banks' balance sheets, profitability and capital adequacy means that we have lost the compass that risk management models were supposed to provide. Rather than being complacent, we should raise our alert levels until we better understand why Covid's impact was so moderate.
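The numerator-versus-denominator argument can be illustrated with the CET1 ratio. In this Python sketch the 4.5% minimum is the Basel III CET1 requirement before buffers; everything else (the RWA figure, the assumed 30% understatement of risk by models and the two capital levels) is hypothetical. A thin capital base breaches the minimum once risk turns out to be underestimated, while a thick one absorbs the same modelling error.

```python
MIN_CET1_RATIO = 0.045  # Basel III CET1 minimum, before any buffers

def cet1_ratio(cet1, rwa):
    """Capital adequacy: numerator (CET1 capital) over denominator (risk-weighted assets)."""
    return cet1 / rwa

reported_rwa = 400.0           # hypothetical modelled RWAs, EUR bn
true_rwa = reported_rwa * 1.3  # assumption: the models understated risk by 30%

for cet1 in (20.0, 52.0):      # a thin and a thick capital base (hypothetical)
    reported = cet1_ratio(cet1, reported_rwa)
    actual = cet1_ratio(cet1, true_rwa)
    status = "breach" if actual < MIN_CET1_RATIO else "ok"
    print(f"CET1 {cet1:.0f}bn: reported {reported:.1%}, with true RWA {actual:.1%} ({status})")
```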

1. Lawrence H. Summers (1991), “The Scientific Illusion in Empirical Macroeconomics”, Scandinavian Journal of Economics 93(2), 129-148.

2. When finalising this article, a very interesting book (“The Illusion of Control”, by Jón Daníelsson, Yale University Press, 2022) came to my attention. Among other deeply interesting issues, it mentions a case in which someone, by not following the result of the model, saved humanity (you will have to buy the book if you want to know more!).

3. The major risks for banks are credit risk, market risk, interest rate risk, operational risk and liquidity risk, but the list seems to grow over time, with cyber risk, climate change (ESG) risk or “step-in risk” added lately. And let's not forget the unquantifiable, but extremely important, reputational risk.

4. “The role of stress testing in supervision and macroprudential policy”, keynote address by Vítor Constâncio, Vice-President of the ECB, at the London School of Economics Conference on “Stress Testing and Macroprudential Regulation: a Trans-Atlantic Assessment”, London, 29 October 2015.